on November 9 | in All, Technology | by Doriane Mouret

Have you ever heard of GeoEye? GeoEye is one of the satellite imagery providers for Google Maps and Google Earth. Its satellite, GeoEye-1, completes 15 orbits a day and can photograph 700,000 square kilometers of the Earth's surface daily, an area about the size of Texas. There are 12 imaging satellites like GeoEye-1 orbiting the Earth. That is of course nothing compared to the 16,976 satellites currently in our skies, but it's enough to provide our web mapping applications with recent pictures of any part of the planet.

It was Google that first made those images available to the general public, with the launch of Google Maps in 2005. Two years later, Google launched Street View, letting Google Maps users virtually explore real places through millions of pictures taken by the Google Street View cars. Microsoft caught up with Street Slide, which enhanced the experience by adding 3D street views.

A week ago, Google went even further by integrating pictures of public “interiors” into its maps. Google now lets you view not only streets, but also the inside of stores, malls, parks, etc. The project is limited to specific countries and businesses for now, but should expand in the near future. Soon, you will be able to visualize any public area of the planet on your phone and visit the entire world without leaving your apartment. It’s amazing what technology lets us do.

It may be amazing, but it isn’t quite true. Think outside the box: most of the pictures available in those applications are one to three years old! The world you visit in your Google or Bing Maps application is the world of 2008, not the world of today. So how could we make this experience more accurate? By combining two brilliant ideas: Crowd-sourcing and Augmented-Reality.

Crowd-sourcing and Augmented-Reality Maps

First things first: what is Augmented-Reality? In a widely cited 1997 survey, Ronald Azuma defined Augmented-Reality as a technique that “allows the user to see the real world, with virtual objects superimposed upon or composited with the real world.” In other words, Augmented-Reality adds graphics to the natural world as it exists.

When applied to mapping technologies, Augmented-Reality can be seen as a technique to display information about the user’s surroundings in a mobile camera view: you point your camera in a certain direction, and the software analyzes the environment and adds geo-located elements to the landscape, such as directions, altitude, temperature, distance to destination, or even pictures taken by other users at a different time. The technique can also be applied to pictures and maps that are not real time. For example, to make Google Street View more accurate, we could let people add more recent pictures to the map as an Augmented-Reality feature.
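
To make the “point your camera” idea a bit more concrete, here is a minimal Python sketch of one step such an app might perform: given the phone’s GPS position and compass heading, decide whether a geo-tagged point of interest is in front of the camera, and if so, where on the screen to draw its label. The haversine distance and initial-bearing formulas are standard; everything else is an assumption for illustration. The helper names, the 60° field of view, the 1080-pixel screen width and the simple linear angle-to-pixel mapping (not a true camera projection) are hypothetical, and this is not how Google or Bing actually implement it.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters

def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two (lat, lon) points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))

def initial_bearing_deg(lat1, lon1, lat2, lon2):
    """Compass bearing (0 = north, clockwise, in [0, 360)) from point 1 toward point 2."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dlmb = math.radians(lon2 - lon1)
    x = math.sin(dlmb) * math.cos(phi2)
    y = math.cos(phi1) * math.sin(phi2) - math.sin(phi1) * math.cos(phi2) * math.cos(dlmb)
    return math.degrees(math.atan2(x, y)) % 360

def screen_x(user, heading_deg, poi, fov_deg=60.0, width_px=1080):
    """Hypothetical helper: horizontal pixel for a POI label, or None if it is off-screen.

    Assumes a fixed horizontal field of view and maps angle to pixels linearly.
    """
    bearing = initial_bearing_deg(user[0], user[1], poi[0], poi[1])
    # Signed angle between where the camera points and where the POI is, in [-180, 180)
    offset = (bearing - heading_deg + 180) % 360 - 180
    if abs(offset) > fov_deg / 2:
        return None  # outside the camera's field of view
    return round((offset / fov_deg + 0.5) * (width_px - 1))

user = (48.8584, 2.2945)  # hypothetical user position (lat, lon)
poi = (48.8606, 2.2945)   # hypothetical POI due north of the user
print(round(haversine_m(*user, *poi)))           # 245 (meters)
print(screen_x(user, heading_deg=0, poi=poi))    # 540: facing north, label centred
print(screen_x(user, heading_deg=180, poi=poi))  # None: facing south, POI is behind you
```

A real app would also use the phone’s tilt to place labels vertically and would filter noisy GPS and compass readings; the sketch only shows the basic geometry of deciding what is in view.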

That’s what the Microsoft Bing Maps team has been working on since 2009. Last year at a TED conference, Blaise Aguera y Arcas, Bing Maps architect, presented the Augmented-Reality Maps project developed by his team. As you’ll see in the video below, Bing Maps will soon let you add virtual elements to your street views, from Flickr pictures to real-time video. Check it out; it’s pretty amazing.

Still, someone has to take and upload all those pictures, so the maps would keep lagging behind reality… unless we combine Augmented-Reality Maps with Crowd-Sourcing! What is Crowd-Sourcing? Crowd-Sourcing is “the act of sourcing tasks traditionally performed by specific individuals to a group of people through an open call.” Basically, Crowd-Sourcing means letting the users of your products share information about them with other users. Your customer becomes your provider. The best example of Crowd-Sourcing is of course Wikipedia, an encyclopedia where users not only consume information but also create it.

Thanks to Augmented-Reality and Crowd-Sourcing, we could create virtual maps that are very close to real time, making the experience of getting around a city more accurate and closer to what it really is. You just built your house and it doesn’t show up on Google Maps or Bing Maps yet? Just take a picture of your house from the street, add it to the map, and that’s it! Now your house is part of the landscape, and people who virtually visit the city will see it.

And there is more! We could imagine Augmented-Reality Maps where business owners add a graphic to their store announcing a promotion on a specific item. We could also add pictures of Foursquare mayors to each place… The possibilities are endless. One of them is very cool: it’s called “Augmented Reality Cinema” and it lets you watch a movie in real life. You point your camera in a specific direction, the software analyzes the landscape, finds a movie scene shot against the exact same background and starts playing it. It’s like a real-life movie theater. Check it out:

Next Step: The Matrix

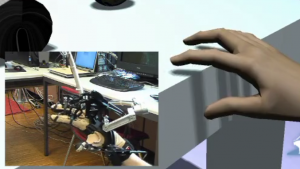

Each year, we push the limits of Augmented-Reality even further. Now not only can you “see” virtual elements integrated into the real world, but you can also smell them, hear them and even touch them. The imitation of the sense of touch is the most impressive one, and it’s called virtual haptics. Virtual haptics reproduce the feeling of touching an object by creating pressure on the user’s hands, usually through wired gloves. Coupled with Augmented-Reality, virtual haptics let you see and touch an object that actually does not exist.

Let’s say you see a table on your screen and you reach out to touch it. You will have the sensation of really touching and grabbing it, when there is actually no table in the room at all!

So now the question is: what if we used virtual haptics on Augmented-Reality Maps? We could reproduce the feeling of actually walking down the street, touching walls, entering stores, etc. We could recreate a world exactly like ours and get around it without ever leaving our seat… Doesn’t that remind you of something? A movie released at the end of the 1990s? It was called “The Matrix”, remember?

Don’t panic! Virtual worlds like that are still a fantasy, far from being realistically implementable. But it’s interesting that the individual technologies that could one day make them possible already exist. Now we just need to keep artificial intelligence robots from taking control of those technologies. Fortunately, AI robots don’t exist yet… or maybe they do?
